Mastering Prompt Engineering: A Practical Guide to Getting Better Results from AI

Prompt engineering sounds straightforward. You tell a language model what you want, and it delivers. In practice, the gap between a casual instruction and a prompt that consistently produces reliable results is substantial.

The challenge isn't complexity for its own sake. It's about understanding how these models interpret instructions, where they need clarity, and which techniques actually move the needle.

The Anatomy of a Working Prompt

Most prompts contain four elements. A task description explains what you want done. Context provides background information. Examples demonstrate the desired format or approach. The concrete task is the specific question or instruction.

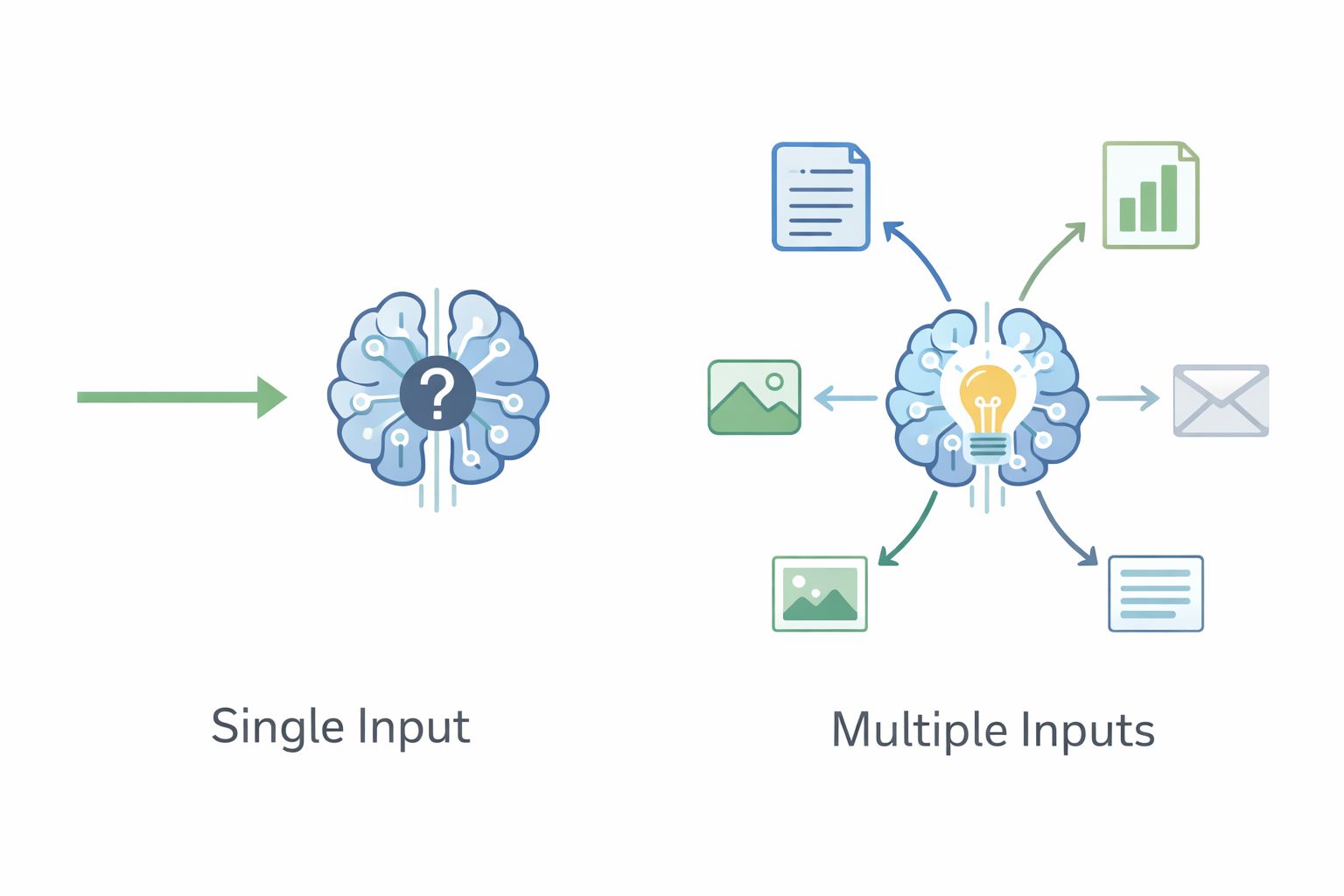

Most chat-style APIs split these elements between a system prompt and a user prompt. System prompts define overarching behavior, roles, or constraints that persist across interactions. User prompts contain the actual work to be done.
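As a rough illustration, the four elements can be mapped onto a system prompt and a user prompt like this. The function name `build_messages`, the role/content dictionary layout, and the choice to put the task description in the system prompt are assumptions made for this sketch, not the format of any particular API.

```python
def build_messages(task_description, context, examples, concrete_task):
    """Split the four prompt elements into a system and a user message.

    Assumption: the task description becomes the system prompt, and the
    context, examples, and concrete task go into the user prompt.
    """
    user_parts = []
    if context:
        user_parts.append(f"Context:\n{context}")
    if examples:
        user_parts.append("Examples:\n" + "\n".join(examples))
    # The concrete task goes last so it is the final thing the model reads.
    user_parts.append(f"Task:\n{concrete_task}")

    return [
        {"role": "system", "content": task_description},
        {"role": "user", "content": "\n\n".join(user_parts)},
    ]


messages = build_messages(
    task_description="You summarize customer support tickets in one sentence.",
    context="The ticket comes from a paying customer.",
    examples=[],
    concrete_task="Summarize: the app crashes every time I log in.",
)
```

Empty elements are skipped, so the same helper covers prompts with or without context and examples.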

How Language Models Actually Process Your Instructions

Models learn from examples provided in the prompt itself without changing their underlying parameters. This is called in-context learning. They recognize patterns in your examples and apply them to the task at hand.
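A minimal sketch of what in-context learning looks like in a prompt: labeled examples come first, then the new input, with the final label left for the model to fill in. The `Input:`/`Output:` labels and the helper name `few_shot_prompt` are illustrative choices, not a required format.

```python
def few_shot_prompt(instruction, examples, query):
    """Build a prompt that shows worked examples before the real input.

    `examples` is a list of (input_text, expected_output) pairs.
    """
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # Leave the final output blank so the model continues the pattern.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [("Loved it, would buy again.", "positive"),
     ("Broke after two days.", "negative")],
    "Shipping was slow but the product is great.",
)
```

Nothing about the model changes here. The pattern lives entirely in the prompt text.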

Models tend to pay more attention to the beginning and end of a prompt than to the middle, especially when the prompt is long. This isn't a flaw so much as a side effect of how attention behaves over long inputs. Place critical instructions where they'll have the most impact.
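One way to act on this, sketched under the assumption that repeating a key instruction is acceptable for your use case: state the critical instruction first, put the long material in the middle, and restate the instruction at the end. The helper name `sandwich_prompt` is hypothetical.

```python
def sandwich_prompt(critical_instruction, long_context, question):
    """Place the critical instruction at both the start and the end.

    The long context sits in the middle, where it is most likely to
    receive less attention.
    """
    return "\n\n".join([
        critical_instruction,
        long_context,
        question,
        f"Reminder: {critical_instruction}",
    ])


prompt = sandwich_prompt(
    critical_instruction="Answer using only the document below.",
    long_context="<several pages of reference text>",
    question="What is the refund window?",
)
```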

The number of examples you need depends on task complexity. Simple tasks often work fine with no examples. Complex tasks benefit from multiple demonstrations. Every example adds tokens to each request, so weigh the gain in output quality against the extra cost and latency.
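To make the trade-off concrete, here is a small sketch that keeps adding examples until a token budget is used up. The four-characters-per-token estimate is a crude assumption for English text, not a real tokenizer, and both function names are hypothetical.

```python
def estimate_tokens(text):
    """Very rough token estimate: assumes about 4 characters per token."""
    return max(1, len(text) // 4)


def fit_examples(examples, token_budget):
    """Return the leading examples that fit in the budget, plus tokens used."""
    chosen, used = [], 0
    for example in examples:
        cost = estimate_tokens(example)
        if used + cost > token_budget:
            break  # the next example would exceed the budget
        chosen.append(example)
        used += cost
    return chosen, used


examples = [
    "Input: Loved it, would buy again.\nOutput: positive",
    "Input: Broke after two days.\nOutput: negative",
    "Input: Does what it says on the box.\nOutput: positive",
]
chosen, used = fit_examples(examples, token_budget=25)
```

For real cost estimates, swap the heuristic for the tokenizer that matches your model.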

Core Techniques That Actually Work

Zero-Shot Prompting

Zero-shot prompting provides instructions without examples. It works well for straightforward tasks where the desired outcome is clear. Translation, summarization, and basic classification often work fine this way.

The advantages are obvious. Fewer tokens mean faster responses and lower costs. Prompts are easier to write and maintain. The downside is limited control over formatting and tone. When precision matters, zero-shot often isn't enough.

Best practices: Be explicit about what you want. Define the output format clearly. State any constraints upfront.
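These three practices can be sketched as a small template: the task, the output format, and the constraints each get their own clearly labeled line. The helper name `zero_shot_prompt` and the label wording are illustrative assumptions.

```python
def zero_shot_prompt(task, output_format, constraints, text):
    """Build an example-free prompt with explicit format and constraints."""
    parts = [task, f"Output format: {output_format}"]
    if constraints:
        parts.append("Constraints:")
        parts.extend(f"- {c}" for c in constraints)
    parts.append(f"Text: {text}")
    return "\n".join(parts)


prompt = zero_shot_prompt(
    task="Classify the sentiment of the text.",
    output_format="one word, either positive or negative",
    constraints=["Do not explain your answer.", "Use lowercase."],
    text="The battery lasts all day and charges fast.",
)
```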

Chain-of-Thought Reasoning

Chain-of-thought prompting asks the model to show its reasoning before providing an answer. This significantly improves performance on math problems, logical reasoning, and multi-step tasks.

The model breaks down complex problems into smaller steps, reducing errors and making outputs more transparent. The trade-off is increased token usage and longer response times. Use chain-of-thought when accuracy matters more than speed.
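A minimal sketch of the pattern: append a step-by-step instruction to the question, ask for the final result on a marked line, and pull that line out of the response. The `Answer:` marker, the instruction wording, and the sample response are assumptions chosen for this example. No model is called here.

```python
import re

COT_SUFFIX = (
    "Think through the problem step by step. "
    "Then give the final result on its own line as 'Answer: <value>'."
)


def cot_prompt(question):
    """Append a chain-of-thought instruction to the question."""
    return f"{question}\n\n{COT_SUFFIX}"


def extract_answer(response):
    """Return the text after the last 'Answer:' line, or None if missing."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


prompt = cot_prompt("A box holds 3 rows of 4 pens, plus 5 loose pens. How many pens?")

# Hand-written stand-in for a model response, used only to show the parsing.
sample_response = "Step 1: 3 * 4 = 12\nStep 2: 12 + 5 = 17\nAnswer: 17"
final = extract_answer(sample_response)
```

Separating the reasoning from a marked final line keeps the extra tokens useful for accuracy without making the output harder to parse.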

Role Prompting

Assigning a role or persona influences how the model approaches a task. A prompt asking for analysis from a "senior financial analyst" will differ from one asking for "an explanation suitable for beginners."

Specific roles work better than vague ones. "Act as an experienced technical writer who specializes in API documentation" produces more consistent results than "act as a writer." The more precisely you define the role, the more reliably the model adopts that perspective.
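As a sketch, a role can be assembled from its parts so that the specific details are hard to leave out. The helper name `role_system_prompt` and its parameters are hypothetical. The resulting string would typically be used as a system prompt.

```python
def role_system_prompt(role, specialty=None, audience=None):
    """Compose a role instruction, adding detail when it is provided."""
    prompt = f"Act as {role}"
    if specialty:
        prompt += f" who specializes in {specialty}"
    prompt += "."
    if audience:
        prompt += f" Write for {audience}."
    return prompt


vague = role_system_prompt("a writer")
specific = role_system_prompt(
    "an experienced technical writer",
    specialty="API documentation",
    audience="developers new to the product",
)
```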

The Reality of Effective Prompting

Effective prompt engineering isn't about memorizing techniques or following rigid formulas. It's about understanding how these models interpret instructions and crafting prompts that consistently produce reliable results.

The core techniques covered here work across different models and use cases. Zero-shot prompting for simple tasks. Few-shot when you need to demonstrate patterns. Chain-of-thought for complex reasoning. Role prompting to establish perspective. Prompt chaining for reliability in complex systems.

Master these fundamentals. Test systematically. Iterate based on real results. The gap between prompts that sometimes work and prompts that reliably deliver closes with practice and honest evaluation of what actually produces results.

Until next time,